DeepSeek: Conditional Memory via Scalable Lookup: A New Axis of Sparsity for Large Language Models

In this post, we explore the innovative concepts presented in the paper "Conditional Memory via Scalable Lookup: A New Axis of Sparsity for Large Language Models" by leading researchers at DeepSeek. Discover how memory techniques can transform the way large language models (LLMs) access and utilize information, making them more efficient and effective in a variety of tasks.

We'll delve into the architecture that allows models to bypass traditional computation-heavy methods and instead leverage a more streamlined approach. Learn how these advancements can enhance both the speed and accuracy of AI reasoning while reducing the cognitive load on models.

📌 What You'll Learn:

• 🧠 The importance of transitioning from "thinking" to "remembering" in AI

• 🔍 How engrams serve as memory aids in LLMs

• 📊 The impact of context-aware gating on model performance

• 🏗️ Strategies for combining conditional computation with memory

• 🚀 The future trajectory of conditional memory in next-gen models

Conditional Memory via Scalable Lookup: A New Axis of Sparsity for Large Language Models

https://arxiv.org/pdf/2601.07372

Xin Cheng Wangding Zeng, Damai Dai, Qinyu Chen, Bingxuan Wang, Zhenda Xie, Kezhao Huang, Xingkai Yu, Zhewen Hao, Yukun Li, Han Zhang, Huishuai Zhang, Dongyan Zhao, Wenfeng Liang

Peking University, DeepSeek-AI

{zhanghuishuai, zhaody}@pku.edu.cn

{chengxin, zengwangding, damai.dai}@deepseek.com

An Explainer Video:

A Gentle Slide Deck:

Let's Dive In...

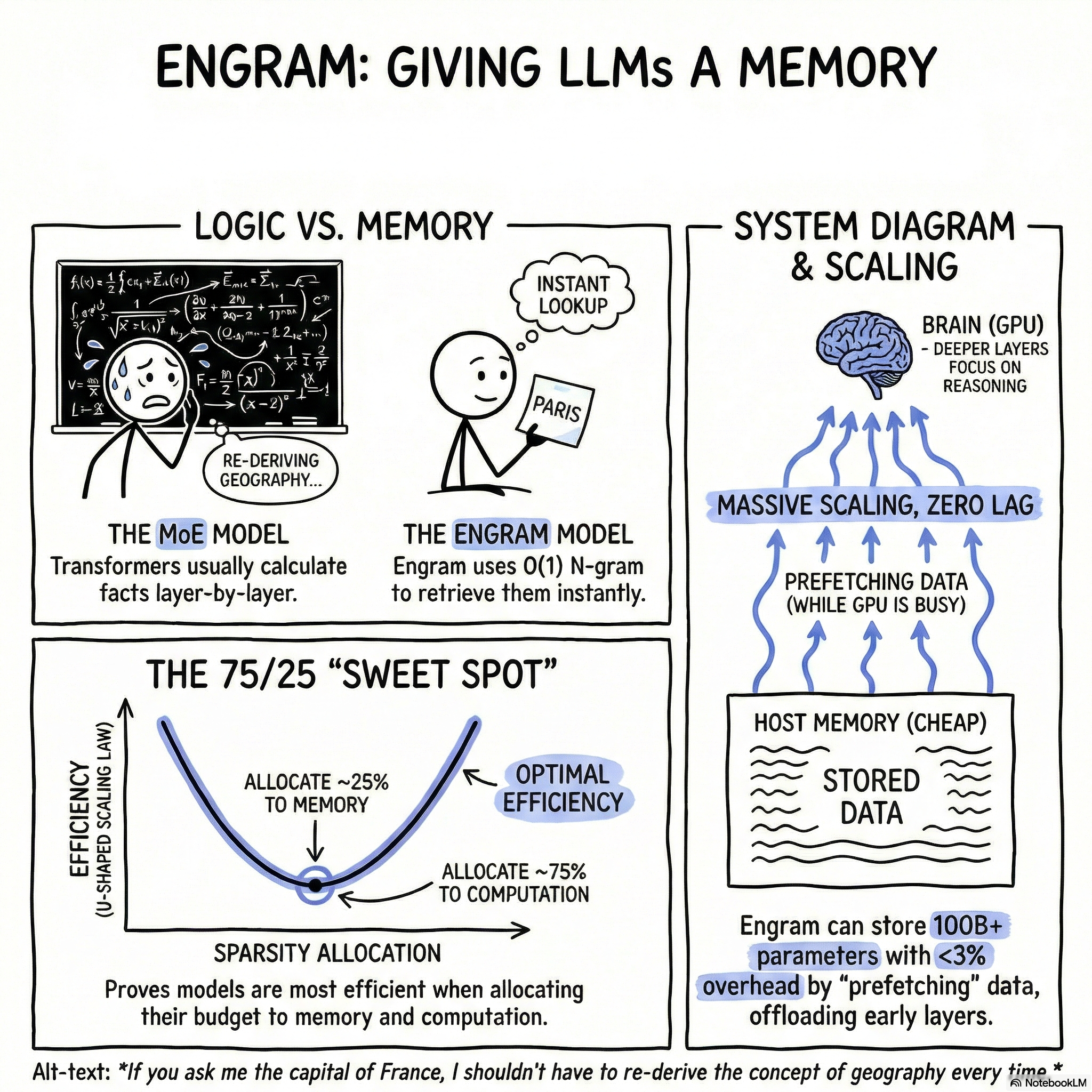

1. The Architectural Duality of Language: Computation vs. Retrieval

The current dominance of Mixture-of-Experts (MoE) architectures marks a milestone in scaling Large Language Models (LLMs) through conditional computation. However, an intrinsic architectural mismatch persists: standard Transformers lack a native primitive for knowledge lookup, forcing them to inefficiently simulate retrieval through sequential computation. This "Simulation Inefficiency" extracts a high computational tax; the model is forced to expend its most expensive resources—multi-layer Attention and Feed-Forward Networks (FFNs)—merely to reconstruct static, stereotyped entities. Empirical evidence (e.g., Table 3 in the source) demonstrates that resolving a specific entity like "Diana, Princess of Wales" requires the model to consume layers 1 through 6 to progressively aggregate features that could be addressed by a simple O(1) lookup.

Linguistic Duality and Resource Allocation:

- Compositional Reasoning (Dynamic): Demands deep, non-linear hidden state transformations to handle novel logic, syntactic synthesis, and multi-step inference.

- Linguistic Stereotypes (Static): Includes named entities, formulaic idioms, and N-gram regularities. These are local, fixed, and naturally represented as computationally inexpensive lookups.

The strategic "So What?" is clear: by forcing the network to utilize early layers for reconstructing static knowledge, we diminish the effective depth available for complex reasoning. Every layer sequestered for factual reconstruction is a layer lost for mathematical derivation or code synthesis. Engram modernizes N-gram embeddings into a first-class modeling primitive, introducing conditional memory as a secondary axis of sparsity to resolve this fundamental inefficiency.

--------------------------------------------------------------------------------

2. The Engram Module: Sparse Retrieval and Context-Aware Fusion

The Engram module decouples parameter storage from dynamic hidden states, ensuring the Transformer backbone remains dedicated to high-level computation while offloading factual density to a sparse memory hierarchy.

The Retrieval Phase

The process begins with Tokenizer Compression, utilizing a surjective mapping function (NFKC normalization and lowercasing) to collapse raw tokens into canonical identifiers. This process yields a 23% reduction in effective vocabulary size, maximizing semantic density. To manage the combinatorial explosion of N-gram space (default \{2, 3\}-grams), we employ Multi-Head Hashing. For each order n, K distinct heads utilize a lightweight multiplicative-XOR hash to map contexts to indices within massive embedding tables. This deterministic addressing allows for O(1) lookup efficiency while mitigating collision noise.

The Fusion Phase

Retrieved embeddings e_t act as context-independent priors but must be modulated to resolve polysemy and hash collisions. We employ a Context-aware Gating mechanism where the hidden state h_t acts as a Query.

The Gating Equation: \alpha_t = \sigma \left( \frac{RMSNorm(h_t)^\top RMSNorm(k_t)}{\sqrt{d}} \right) Where \alpha_t is a scalar gate that enforces semantic alignment, suppressing retrieved memory that contradicts the current latent context.

Integration Strategy

Engram is optimized for multi-branch (mHC) architectures. To maximize efficiency, the Value projection matrix W_V is shared across all branches, while Key matrices W_K^{(m)} are branch-specific. This allows for fine-grained, branch-specific gating while ensuring that all linear projections can be fused into a single, high-throughput dense FP8 matrix operation, maintaining optimal GPU utilization.

--------------------------------------------------------------------------------

3. The Sparsity Allocation Problem and Scaling Laws

A primary challenge in architecting sparse systems is the Sparsity Allocation problem: the optimal division of a fixed parameter budget (P_{tot}) and FLOP budget (P_{act}) between experts (computation) and memory. We define \rho \in [0, 1] as the fraction of inactive parameters allocated to MoE experts.

Empirical analysis reveals a robust U-Shaped Scaling Law. A pure MoE approach (\rho = 1) is consistently suboptimal. In the 10B parameter regime, reallocating 20%–25% of the sparse budget to Engram memory reduces validation loss from 1.7248 to 1.7109.

Allocation Ratio (\rho) | Configuration Strategy | Performance Delta (Val Loss) |

\rho = 1.0 | Pure MoE | Baseline Suboptimal |

\rho = 0.75 - 0.80 | Optimal Engram-MoE Hybrid | -0.0139 (Peak Efficiency) |

\rho \to 0 | Memory-Heavy | Logic Degradation |

In the "Infinite Memory Regime," Engram exhibits a strict log-linear trend as memory slots scale to 10^7. Unlike the "OverEncoding" baseline, Engram unlocks significantly higher scaling potential for the same memory budget, providing a predictable knob for capacity expansion without increasing training FLOPs.

--------------------------------------------------------------------------------

4. Empirical Evaluation: Beyond Factual Retrieval

Engram was validated through a 262B token pre-training curriculum comparing Dense-4B, MoE-27B, Engram-27B, and Engram-40B. Crucially, Engram-27B was tested against a strictly iso-parameter and iso-FLOPs MoE baseline.

Category | Benchmark | MoE-27B | Engram-27B | Delta |

Knowledge | MMLU (5-shot) | 57.4 | 60.4 | +3.0 |

CMMLU (5-shot) | 57.9 | 61.9 | +4.0 | |

Reasoning | BBH (3-shot) | 50.9 | 55.9 | +5.0 |

ARC-Challenge | 70.1 | 73.8 | +3.7 | |

Code/Math | HumanEval | 37.8 | 40.8 | +3.0 |

MATH (4-shot) | 28.3 | 30.7 | +2.4 |

The "surprising" gains in reasoning (BBH +5.0) and math (MATH +2.4) confirm that Engram’s primary value is not merely "knowing more," but rather "freeing the backbone." By offloading the burden of facts, the model utilizes its parameters more effectively for the structural logic required in high-reasoning tasks.

--------------------------------------------------------------------------------

5. Global Context Expansion and Attention Offloading

Delegating local syntactic and N-gram dependencies to lookups preserves Attention Capacity for global context. We evaluated this through Long-Context Extension Training (32k context) using the RULER (32k) benchmarks.

Key Findings:

- Multi-Query NIAH: Engram-27B achieved 97.0 accuracy vs. 84.2 for the MoE baseline.

- Variable Tracking: Engram-27B reached 89.0 vs. 77.0 for the baseline.

- Iso-Loss Superiority: In an Iso-Loss setting (Engram-27B at 46k steps vs. MoE-27B at 50k steps), the Engram architecture significantly outperformed the baseline, proving that its long-context advantage is structural rather than a byproduct of lower pre-training loss.

- Extreme Efficiency: An early-stopped Engram-27B (41k steps) matched a fully trained MoE baseline on LongPPL, matching performance with only ~82% of the compute.

--------------------------------------------------------------------------------

6. Mechanistic Deep Dive: The Effective Depth Hypothesis

We investigated the internal representations of Engram using LogitLens and Centered Kernel Alignment (CKA) with the Few-NERD dataset and an unbiased HSIC (Hilbert-Schmidt Independence Criterion) estimator.

- Accelerated Prediction Convergence: LogitLens analysis shows that Engram models maintain consistently lower KL divergence in early layers. This indicates the model reaches a "prediction-ready" state much faster than the MoE baseline.

- Representational Alignment: CKA heatmaps reveal that Layer 5 of Engram-27B aligns with Layer 12 of MoE-27B. This effectively "deepens" the network by seven layers without adding the sequential compute cost of additional Transformer blocks.

- Functional Specialization: Post-hoc ablation (removing Engram during inference) reveals a sharp dichotomy: factual knowledge collapses (TriviaQA: 29% retention), while context-grounded reasoning remains intact (C3: 93% retention). This proves Engram acts as the primary repository for parametric knowledge, leaving the backbone to specialize in reasoning.

--------------------------------------------------------------------------------

7. Infrastructure-Aware Efficiency: Prefetching and Offloading

The viability of conditional memory relies on hardware-algorithm co-design. Unlike MoE routing, which is dynamic and state-dependent, Engram IDs are deterministic—calculated as soon as input tokens are received.

This enables a Prefetch-and-Overlap strategy. Using the nano-vLLM engine on NVIDIA H800 hardware, we trigger asynchronous host-to-device transfers (PCIe) for embeddings while the GPU processes preceding blocks.

Inference Throughput (100B Parameter Table Offloaded to Host DRAM):

Base Model | Baseline (tok/s) | w/ Engram (tok/s) | Penalty |

4B-Dense | 9,031.6 | 8,858.3 | 1.9% |

8B-Dense | 6,315.5 | 6,140.0 | 2.8% |

The throughput penalty is negligible (<3%). By exploiting the Zipfian distribution of language, a Multi-Level Cache Hierarchy can keep high-frequency embeddings in HBM while offloading the long tail to NVMe, future-proofing massive parameter expansion.

Conclusion: Conditional memory is an indispensable modeling primitive. By decoupling static retrieval from dynamic computation, Engram provides a scalable, infrastructure-aware axis of sparsity that allows next-generation models to achieve unprecedented effective depth and reasoning capacity.

Fin...