Solving Catastrophic Forgetting via Self-Distillation Fine-Tuning (SDFT)

Self-Distillation Enables Continual Learning

https://arxiv.org/pdf/2601.19897

Idan Shenfeld, MIT; Mehul Damani, MIT; Jonas Hübotter, ETH Zurich; Pulkit Agrawal, MIT

In this engaging post, we delve into the innovative research paper "Self-Distillation Enables Continual Learning" by the talented team from MIT, Improbable AI Lab, and ETH Zurich. Discover how self-distillation fine-tuning (SDFT) offers a powerful solution to the static model problem, allowing AI systems to learn new skills while retaining existing knowledge.

We explore the limitations of traditional supervised fine-tuning (SFT) and the phenomenon of catastrophic forgetting. Learn how self-distillation transforms the learning process by enabling models to teach themselves. This comprehensive breakdown will enhance your understanding of continual learning and its implications for AI development. 🌟

📌 What You'll Learn:

• The static model problem and its implications for AI training

• How self-distillation fine-tuning (SDFT) overcomes limitations of supervised fine-tuning

• The mechanics of the distillation loop and its impact on continual learning

• Key results demonstrating SDFT's advantages over traditional methods

An Explainer Video:

A Gentle Slide Deck:

Let's Dive In...

1. The Critical Bottleneck in Model Evolution

In the current landscape of foundation model deployment, Large Language Models (LLMs) are largely treated as "point-in-time artifacts." Once training is finalized, their internal parameters remain static, and adaptation is relegated to inference-time interventions such as Retrieval-Augmented Generation (RAG) or In-Context Learning (ICL). This creates a significant strategic bottleneck and an escalating Total Cost of Ownership (TCO), as any meaningful integration of new domain knowledge or emergent skills necessitates expensive, full-scale retraining cycles to avoid state-space drift. Moving toward a "Dynamic Knowledge Asset" model—where systems learn perpetually—is the next frontier in AI architecture.

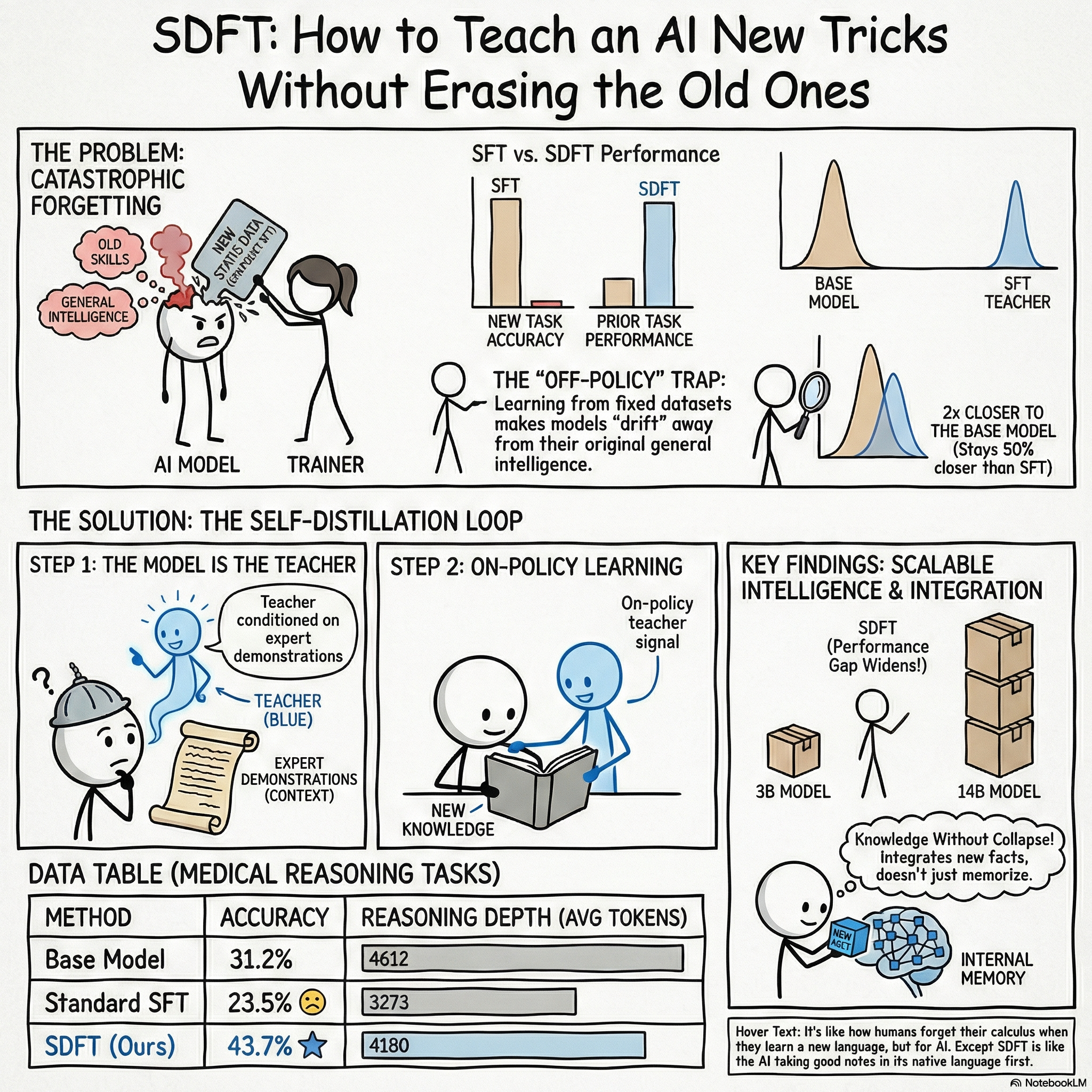

The primary obstacle to this evolution is the fragility of parameter updates. Standard Supervised Fine-Tuning (SFT) is fundamentally flawed for long-term lifecycle management because it is inherently off-policy. By training a model to imitate fixed, offline expert demonstrations, SFT forces the policy into states that may not align with its current distributional behavior, leading to compounding errors at inference time. This failure manifests as "Catastrophic Forgetting," where the acquisition of a new skill (e.g., specialized tool use) imposes a "forgetting tax" on general capabilities. Empirically, we see general reasoning performance drop from a 65% baseline to as low as 53% following sequential SFT. Self-Distillation Fine-Tuning (SDFT) resolves this by shifting the paradigm from offline imitation to on-policy distillation, utilizing the model’s own emergent capabilities as the supervisory signal.

2. Architectural Framework: The Dual-Role Self-Distillation Mechanism

SDFT identifies that a sufficiently scaled model’s ICL ability is a more efficient and reliable path to supervision than external reward functions, which are often noisy or difficult to engineer. By leveraging "context distillation," we can induce a "wiser" teacher state without requiring a separate, larger model. This creates a closed-loop learning environment where the model corrects its own trajectory errors.

The architecture employs a "Student-Teacher" configuration using the same model weights. The Student responds to a query x based on its current parameters. The Teacher is the same model, but its behavior is modified by conditioning it on an expert demonstration c using a specific prompt designed to prevent verbatim copying: "Now answer with a response of your own, including the thinking process." This logic ensures the teacher extracts the underlying intent and reasoning of the demonstration rather than acting as a simple copy-paste mechanism.

Feature | Student Role (P) | Teacher Role (Q) |

Conditioning | Task Query (x) | Query (x) + Expert Demonstration (c) |

Output Distribution | $P = \pi(\cdot | x)$ |

Weight Parameters | Active training weights (\theta) | Exponential Moving Average (EMA) of \theta |

Goal | Minimize Reverse KL to Q | Provide "wiser" on-policy signal |

This dual-role setup relies on the In-Context Assumption (\pi^*_{k+1} \approx \pi(y|x, c)), which posits that the behavioral shift induced by a demonstration accurately approximates the optimal next-step policy. By tracking progress via an EMA teacher, the system stabilizes the learning signal and avoids the divergence common in self-imitation loops.

3. Mathematical Foundations: SDFT as Implicit Inverse Reinforcement Learning (IRL)

To transition from black-box fine-tuning to controlled optimization, we must ground SDFT in reinforcement learning theory. SDFT is mathematically equivalent to maximizing an intrinsic reward function derived from the teacher’s preferences, effectively bypassing the Reward Identification Problem that typically plagues Inverse Reinforcement Learning (IRL). By using the model’s own ICL capabilities as the "optimal" policy \pi^*, we avoid the need for manual reward engineering or strong structural priors.

The objective minimizes the reverse Kullback-Leibler (KL) divergence between the student and teacher:

L(\theta) = D_{KL}(\pi_\theta(\cdot|x) || \pi(\cdot|x, c)) = E_{y \sim \pi_\theta(y|x)} \left[\log \frac{\pi_\theta(y|x)}{\pi(y|x, c)}\right]

This objective possesses a critical mode-seeking behavior. In reasoning tasks, reverse KL prevents the student from "averaging" across various possible answer distributions—a common cause of hallucination in SFT. Instead, it forces the student to commit to the high-probability "mode" established by the teacher's reasoning path.

The token-level reward mechanism (r_t) is defined as the immediate log-probability change: r_t(y_t | y_{<t}, x, c) = \log \pi(y_t | y_{<t}, x, c) - \log \pi_k(y_t | y_{<t}, x)

Furthermore, this setup provides superior trust-region adherence. Because the teacher is an EMA-informed version of the model itself, the student distribution remains anchored to the base model. Empirical data shows a teacher proximity of 0.68 nats for SDFT compared to 1.26 nats for SFT, ensuring the policy does not drift into "unseen states" that trigger compounding errors.

4. Operational Advantages for Knowledge and Skill Acquisition

SDFT offers a distinct advantage in operational efficiency, particularly when balancing training-time FLOPs against inference-time costs. While SDFT requires approximately 2.5x more FLOPs during training due to on-policy rollouts, it significantly reduces inference-time latency and context-window bloat compared to RAG-heavy architectures.

Operational Wins:

- Knowledge Integration: In Science Q&A (SciKnowEval), SDFT achieved 89% strict accuracy versus 80% for SFT. More importantly, it showed nearly perfect Out-of-Distribution (OOD) performance (98%), proving that facts are integrated into the weights rather than shallowly memorized.

- Prevention of Reasoning Collapse: A major risk in SFT is "Reasoning Collapse," where the model learns to provide final answers while skipping necessary cognitive steps. In tests with Olmo-3-7B-Think, SFT caused a drastic reduction in average response length from 4612 to 3273 tokens, corresponding to an accuracy drop. SDFT maintained a depth of 4180 tokens and increased accuracy to 43.7%.

- Credit Assignment Density: By providing supervision at the token level (using the analytic per-token KL estimator) rather than the trajectory level, SDFT provides a denser learning signal than standard RLHF/PPO, leading to faster convergence for complex skills like Tool Use (ToolAlpaca).

5. Evaluating the Pareto Efficiency of Continual Learning

The "Stability-Plasticity Dilemma" is the primary hurdle in foundation model development. Measuring new-task accuracy in isolation is deceptive; true efficiency is found in the Pareto frontier where new skills are acquired without degrading the existing core.

Model Variant | Prior Tasks Performance (Avg) | New Task Accuracy (Tool Use) |

Base Model (Qwen 2.5-7B) | 65.5% | 42.9% |

SFT (Baseline) | 56.0% | 63.2% |

SDFT (Ours) | 65.4% | 70.6% |

The "Scaling Floor" and Cumulative Stability: The efficacy of SDFT is intrinsically tied to model scale. Our research identifies a "capability floor": at the 3B parameter scale, SDFT actually underperforms SFT by a -3.3 gap because the model’s ICL is too weak to provide a high-quality teacher signal. However, the advantage turns positive at 7B (+4.0) and widens to +6.9 at 14B. For organizations using mid-to-large-scale models, SDFT represents a compounding asset. Sequential learning experiments (Figure 3) further prove that SDFT enables the accumulation of multiple distinct skills (Science, Tool Use, Medical) without the oscillatory interference and "forgetting spikes" seen in SFT.

6. Implementation Strategies and Strategic Limitations

Implementing SDFT requires a shift in the ML pipeline from static data loading to dynamic rollout generation.

- Teacher EMA: An EMA of student parameters (\alpha \approx 0.01-0.05) is mandatory. Using a frozen base model fails to capture learning progress, while using the "live" student induces high-variance feedback loops that lead to divergence.

- Analytic KL Estimator: For production stability, we recommend the "full analytic per-token estimator." While theoretically biased at the sequence level, it offers significantly lower variance than sample-based Monte-Carlo estimators, ensuring stable gradient updates during the reverse KL minimization.

- Loss Masking: To prevent the student from inheriting spurious "teacher-speak" (e.g., "Based on the provided example..."), early-token loss masking must be implemented on the first few tokens of the response.

Limitations: The primary constraint is the requirement for a base model with mature ICL capabilities. SDFT is also not a "magic bullet" for fundamental stylistic shifts; it excels at acquiring skills within the model’s existing reasoning framework but struggles to force a non-reasoning model to adopt complex chain-of-thought patterns.

7. Strategic Summary: The Path to Perpetual Learning

SDFT marks the transition from viewing LLMs as static artifacts to seeing them as evolving systems. By leveraging on-policy distillation, we can finally bypass the "forgetting tax" that has historically hindered model adaptation and restricted the utility of fine-tuning in production.

Strategic Implications for Leadership:

- Reliability: SDFT eliminates the catastrophic forgetting that destabilizes model updates, ensuring that new domain expertise does not degrade general reasoning.

- Scalability: The method’s ROI compounds with model scale; as your models grow, the "internal teacher" becomes more effective, increasing the efficiency of every training dollar.

- Future-Proofing: By reducing the dependency on expensive, reasoning-labeled data and explicit rewards, SDFT provides a practical path for models to learn from unstructured user demonstrations and raw interactions.

On-policy distillation via SDFT is the most viable path currently available for achieving true, lifelong learning from demonstrations in large-scale AI systems.

Fin..